Analyze a dataset in memory

Here, we’ll analyze the growing dataset by loading it into memory.

This is only possible if the dataset is not too large. If you work with particularly large data, please read the guide on iterating over data batches (to come).

import lamindb as ln

import lnschema_bionty as lb

import anndata as ad

💡 loaded instance: testuser1/test-scrna (lamindb 0.56a1)

ln.track()

💡 notebook imports: anndata==0.9.2 lamindb==0.56a1 lnschema_bionty==0.32.0 scanpy==1.9.5

💡 Transform(id=4, uid='mfWKm8OtAzp8z8', name='Analyze a dataset in memory', short_name='scrna4', version='0', type=notebook, updated_at=2023-10-16 21:48:04, created_by_id=1)

💡 Run(id=4, uid='A5UvkmfgqO80MkP0uJfp', run_at=2023-10-16 21:48:04, transform_id=4, created_by_id=1)

ln.Dataset.filter().df()

| id | uid | name | description | version | hash | reference | reference_type | transform_id | run_id | file_id | storage_id | initial_version_id | updated_at | created_by_id |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 1 | MpmFBPjOegAuuyRzbGAq | My versioned scRNA-seq dataset | None | 1 | 6Hu1BywwK6bfIU2Dpku2xZ | None | None | 1 | 1 | 1.0 | None | NaN | 2023-10-16 21:47:05 | 1 |
| 2 | PUwAqZ7C9c7EBQ7f0IgN | My versioned scRNA-seq dataset | None | 2 | JNjc88f22TLVPJHdgo7X | None | None | 2 | 2 | NaN | None | 1.0 | 2023-10-16 21:47:46 | 1 |

dataset = ln.Dataset.filter(name="My versioned scRNA-seq dataset", version="2").one()

dataset.files.df()

| id | uid | storage_id | key | suffix | accessor | description | version | size | hash | hash_type | transform_id | run_id | initial_version_id | updated_at | created_by_id |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 1 | MpmFBPjOegAuuyRzbGAq | 1 | None | .h5ad | AnnData | Conde22 | None | 57615999 | 6Hu1BywwK6bfIU2Dpku2xZ | sha1-fl | 1 | 1 | None | 2023-10-16 21:47:05 | 1 |
| 2 | qscOv2TpO4PaevwVlwRs | 1 | None | .h5ad | AnnData | 10x reference adata | None | 857752 | j6o6e27xPdqHQyT7Em_7MQ | md5 | 2 | 2 | None | 2023-10-16 21:47:42 | 1 |
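The size column in the file table above gives a quick way to judge whether loading into memory is reasonable. The following is a minimal sketch, not part of lamindb: the sizes are copied by hand from the table, and the 4 GiB memory budget is a hypothetical value chosen only for illustration. The on-disk size is at best a rough lower bound, since stored files may be compressed.

```python
# File sizes in bytes, copied by hand from the "size" column of the table above.
file_sizes_bytes = {
    "Conde22": 57_615_999,
    "10x reference adata": 857_752,
}

# Hypothetical memory budget (4 GiB); adjust to the machine at hand.
memory_budget_bytes = 4 * 1024**3

total_bytes = sum(file_sizes_bytes.values())
total_mib = total_bytes / 1024**2
fits = total_bytes < memory_budget_bytes

print(
    f"{len(file_sizes_bytes)} files, {total_mib:.1f} MiB on disk, "
    f"fits budget: {fits}"
)
```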

If the dataset doesn’t consist of too many files, we can now load it into memory.

Under the hood, the AnnData objects are concatenated during loading.

How long this takes depends on several factors, such as the number and size of the files.

If you load the dataset often, consider storing a concatenated version of it rather than the individual pieces.

adata = dataset.load()

By default, concatenation uses an outer join, as in pandas:

adata

AnnData object with n_obs × n_vars = 1718 × 36508

obs: 'donor', 'tissue', 'cell_type', 'assay', 'n_genes', 'percent_mito', 'louvain', 'file_id'

obsm: 'X_umap', 'X_pca'
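To make the outer join concrete, here is a small pandas analogy. It is a sketch on toy data (the cell and gene names are made up) and not the code that `dataset.load()` runs: rows stand in for cells, columns for genes, and the two frames share only one column.

```python
import pandas as pd

# Two toy "files": rows are cells, columns are genes (made-up names).
a = pd.DataFrame(
    {"gene_a": [1.0, 2.0], "gene_b": [3.0, 4.0]},
    index=["cell_1", "cell_2"],
)
b = pd.DataFrame(
    {"gene_b": [5.0], "gene_c": [6.0]},
    index=["cell_3"],
)

# Outer join: keep the union of columns, fill missing entries with NaN.
outer = pd.concat([a, b], join="outer")

# Inner join: keep only the columns present in every input.
inner = pd.concat([a, b], join="inner")

print(outer)
print(inner)
```

With an outer join no gene is dropped, at the cost of missing values wherever a file did not measure that gene. An inner join would keep only the shared genes.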

The AnnData object references the individual files in its .obs annotations:

adata.obs.file_id.cat.categories

Int64Index([1, 2], dtype='int64')
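A categorical `file_id` column like this can be used to count or select cells per source file. The sketch below uses a plain pandas DataFrame with made-up cell type labels as a stand-in for `adata.obs`; with a real AnnData object, the same boolean mask can be used to index `adata` itself.

```python
import pandas as pd

# Toy stand-in for adata.obs; the cell type labels are made up.
obs = pd.DataFrame(
    {
        "cell_type": ["T cell", "B cell", "T cell", "monocyte"],
        "file_id": pd.Categorical([1, 1, 2, 2]),
    }
)

# Number of cells contributed by each file.
cells_per_file = obs["file_id"].value_counts().sort_index()

# Boolean mask selecting only the cells that came from file 1.
from_file_1 = obs[obs["file_id"] == 1]

print(cells_per_file)
print(from_file_1)
```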

We can easily obtain Ensembl IDs for gene symbols using the lookup object:

genes = lb.Gene.lookup(field="symbol")

genes.itm2b.ensembl_gene_id

'ENSG00000136156'

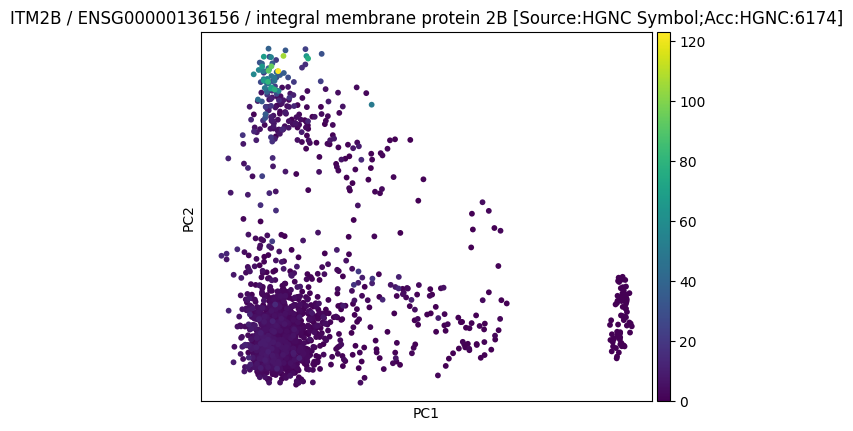
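The idea behind such a lookup object can be sketched in a few lines of plain Python. This is an illustration only and not how `lb.Gene.lookup` is implemented: `build_lookup` and `genes_sketch` are hypothetical names, and the single record reuses the ITM2B symbol and Ensembl ID shown above.

```python
from types import SimpleNamespace

# Toy records; only the ITM2B symbol/ID pair is taken from the text above.
records = [
    {"symbol": "ITM2B", "ensembl_gene_id": "ENSG00000136156"},
]


def build_lookup(records, field):
    """Expose each record as an attribute named after its lowercased field value."""
    lookup = SimpleNamespace()
    for record in records:
        # Make the value usable as a Python attribute name.
        attr_name = record[field].lower().replace("-", "_")
        setattr(lookup, attr_name, SimpleNamespace(**record))
    return lookup


genes_sketch = build_lookup(records, field="symbol")
print(genes_sketch.itm2b.ensembl_gene_id)
```

Exposing records as attributes is what makes tab completion work in a notebook: typing `genes_sketch.` lists the available symbols.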

Let us create a plot:

import scanpy as sc

sc.pp.pca(adata, n_comps=2)


sc.pl.pca(
    adata,
    color=genes.itm2b.ensembl_gene_id,
    title=(
        f"{genes.itm2b.symbol} / {genes.itm2b.ensembl_gene_id} /"
        f" {genes.itm2b.description}"
    ),
    save="_itm2b",
)

WARNING: saving figure to file figures/pca_itm2b.pdf

file = ln.File("./figures/pca_itm2b.pdf", description="My result on ITM2B")

file.save()

file.view_flow()

Because the image is part of the notebook, there is no real need to save it separately. You can also rely on the report that is created when you save the notebook from the command line:

lamin save <notebook_path>